As everyone knows, terrible mistakes are often made in IT procurement. Anyone who has ever tried to apply some holistic thinking to institutional systems (“hey, let’s publish X from legacy database Y on the web!”) will have come across this. The closer you delve into data stores the more grim the story seems to become. Sometimes it’s because systems have been procured in the absence of any strategic guiding direction for IT. Often it’s departments who “just want to do X” and don’t care a stuffed fig about how else their data could be sifted, filtered or presented. Sometimes, it’s the unforgivable sin of IT departments thinking they understand users when their competancy is, obviously, in IT. Usually, though, it comes down to cost, and as long as The System delivers The Specific Project Requirement then all is ok with the world. This makes life hard for people like me who like to minimise duplication and see data flowing as freely as possible around the universe.

As everyone knows, terrible mistakes are often made in IT procurement. Anyone who has ever tried to apply some holistic thinking to institutional systems (“hey, let’s publish X from legacy database Y on the web!”) will have come across this. The closer you delve into data stores the more grim the story seems to become. Sometimes it’s because systems have been procured in the absence of any strategic guiding direction for IT. Often it’s departments who “just want to do X” and don’t care a stuffed fig about how else their data could be sifted, filtered or presented. Sometimes, it’s the unforgivable sin of IT departments thinking they understand users when their competancy is, obviously, in IT. Usually, though, it comes down to cost, and as long as The System delivers The Specific Project Requirement then all is ok with the world. This makes life hard for people like me who like to minimise duplication and see data flowing as freely as possible around the universe.

This isn’t a time to be pointing fingers (although I think any IT department who doesn’t insist on an API, a forward migration plan and some end-user research should be lined up against the wall and shot in the kneecaps..). Instead, I thought I’d focus on one particular case study where valuable data is held in an institution but not (usually) in any useful form: for museums, it’s the exhibit labelling.

In a perfect world, all exhibit labels would pass through the institutional content management system workflow: they’d be written, edited and signed off before being stored in some kind of XML repository ready for export to PDF for signage printing. This is roughly where NMSI is headed (one day, anyway), and I believe where the Tate are – certainly when it comes to printed publications, anyway..

In the real world of course, this often isn’t the case. Curatorial staff do their authoring in Word (or worse, some grimness like Wordstar 0.4 running on Windows 3.1..) and save their work to their local hard drives, ready to be wiped, lost, not backed up, corrupted. This isn’t a specific criticism of curators but more a criticism of the mode of thinking which often exists (or fails to exist) in content-rich institutions like museums: this content isn’t just for gallery – it’s for web, kiosk, mobile, intranet, email, posters, pdfs, marketing, and a whole bunch of other stuff you haven’t though of yet.

Back to the real world. On a recent visit to Swindon Steam museum (absolutely great, btw) I got wondering what you’d do if you came at this from the opposite direction: what if you had labels but your core data was held somewhere you couldn’t get at – probably the case for pretty much any museum with galleries over 5 years old…

A while back at the Science Museum we did some experimentation on exactly this problem, but I thought I’d try again. It’s terribly straightforward but it turns out it’s actually a pretty effective process:

1. Take photo of exhibit label

2. Do some minor image manipulation

3. OCR the text and save it

and, ultimately – 4. Devise some kind of XML schema for the data

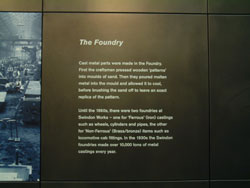

Here’s the original label (not real size – original pic was roughly 3000 x 2000px) :

Here is it cropped, sharpened, and inverted:

I then downloaded a bit of Freeware called Softi Free OCR and ran the OCR. I ended up with this:

The Foundry

Cast metal parts were made in the Foundry.

First the craftsmen pressed wooden ‘patterns’

into moulds of sand. Then they poured molten

metal into the mould and allowed it to cool,

before brushing the sand off to leave an exact

replica of the pattern.

Until the 1960s, there were two foundries at

Swindon Works – one for ‘Ferrous’ (iron) castings

such as wheels, cylinders and pipes, the other

for ‘Non-Ferrous’ (Brass/bronze) items such as

locomotive cab fittings. In the 1930s the Swindon

foundries made over 10,000 tons of metal

castings every year.

That’s not bad at all, just a few weirdnesses around the quotes which could be quickly corrected – certainly if you invested a few bucks with Amazon’s Mechanical Turk to iron out the edges.

That leaves the last bit – levering this into some kind of XML schema for gallery spaces. It’s getting late so I don’t have time now to do the research but it strikes me that this could be very useful. I’d imagine someone has done it already – let me know if so.

So. Conclusion: sometimes the dirtiest munging in the world can turn up some quite useful stuff. If your legacy stuff can be captured by screen grab, image, txt dump, screen scrape or otherwise, don’t panic – you’ll probably be able to do something with it. Next time, though, think holistically and save yourself the effort…